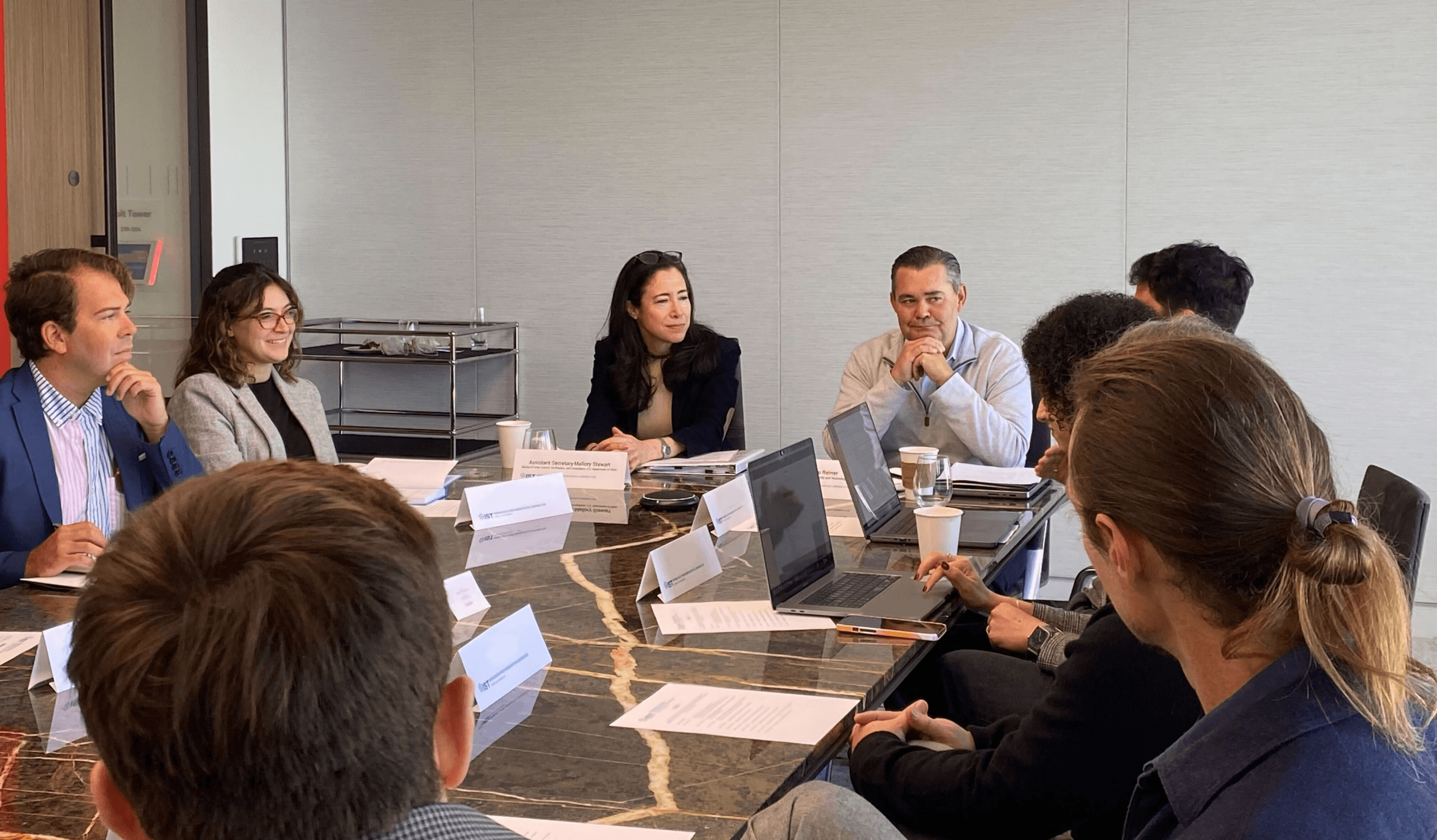

On October 25, 2023, the Institute for Security and Technology’s (IST) Innovation and Catastrophic Risk team hosted Assistant Secretary of State for Arms Control, Verification and Compliance (AVC) Mallory Stewart in San Francisco for a convening on the responsible use of artificial intelligence (AI). This private roundtable connected the U.S. Department of State with national security policy experts and private sector leaders in the Bay Area actively involved in the development and deployment of AI technologies, the research behind them, and their production at scale. The convening, held under the Chatham House Rule, facilitated frank and open discussion on AI safety, alignment, security, and ideas around potential AI arms control and diplomatic confidence building measures.

Assistant Secretary Stewart is actively involved in shaping the Biden Administration’s policies on the intersection of nuclear weapons and arms control with emerging technologies, especially AI. Engagement with industry leaders is a key tenet of policy development, and interactions like this inform the State Department’s policy on responsible use of AI and autonomy.

At the roundtable, participants offered their insights, unpacked challenges and opportunities for AI in the international security context, and explored the anticipated impact of novel AI capabilities, from multi-agentic models and Large Language Models (LLMs) to generative AI.

From this convening, it is clear that there is an urgent need to collectively identify both diplomatic and technical solutions in order to move towards safe and secure AI use, as well as more resilient, collaborative, and responsible AI international governing principles. To support this goal, IST will continue to utilize our platform, act as bridge-builders, shape discourse on this important issue, and facilitate engagements among the national security and technical communities.

IST has previously worked with the Bureau of Arms Control, Verification and Compliance on the integration of artificial intelligence with nuclear command, control, and communications (NC3) systems. Through this partnership, we conducted conversations with scientists, engineers, policymakers, and academics in both the San Francisco Bay Area and Washington, DC, focused on the imperative to manage and mitigate the risks posed by AI-enabled emerging technologies. Our collaboration led to the publication of the report AI-NC3 Integration in an Adversarial Context: Strategic Stability Risks and Confidence Building Measures, which examined the use of a suite of policy tools in the nuclear context, from unilateral AI principles and codes of conduct to multilateral consensus about the appropriate applications of AI systems, and resulted in a series of proposed Confidence Building Measures (CBMs).

This week’s convening continued IST’s efforts to build bridges between industry leaders and policymakers in Washington, DC. We carry this work forward as part of our AI and cybersecurity efforts and our working group focused on open access to AI models.